Published On September 28, 2017

RONALD COHN, PEDIATRICIAN-IN-CHIEF of The Hospital for Sick Children in Toronto, tells the story of a young patient who was brought to him with a connective tissue disorder. The logical next step was a genetic test, which would have helped Cohn determine whether the child had a mild or potentially life-threatening form of the disease. But her parents were wary. “The mother was looking for a new job, and the parents did not have life insurance,” Cohn says. At the time, Canada had no legal protections against genetic discrimination, and the parents worried that a positive result for a serious health condition in the family, if the information got out, might be used against them. They ultimately refused the test.

Unlike Canada, the United States has had a genetic nondiscrimination law on the books since 2008. But it is far from airtight, and Robert Green, a medical geneticist at Brigham and Women’s Hospital and professor of medicine at Harvard Medical School in Boston, has come up against a similar reluctance among potential U.S. research participants. According to preliminary results from an ongoing study by Green and his colleagues, more than one in four parents cited fear of genetic discrimination in their decision not to allow genetic screening of their newborn babies.

That reluctance to put genetic data on record may be well justified. Every human genome is an intimate portrait. DNA sequencing can tell researchers where subjects’ ancestors came from and what diseases might come to dominate their lives. As knowledge of how genes shape people continues to evolve, the data may reveal details that are even more personal: geneticists have, for instance, begun to map out genetic precursors of intelligence and violent behavior. It’s reasonable to want to make sure that this high-resolution picture, unique as a fingerprint, does not fall into the wrong hands.

Yet continuing to amass a treasure trove of genomic data could hardly be more crucial to science. Genes have become central to how physicians understand many diseases, and the new flowering of genetic databases—large aggregations of genomes tied to medical data about individuals—has allowed great advances. Genetic components of cancer, Alzheimer’s disease and the most puzzling cognitive conditions are beginning to be teased out. And with more and more genomes being sequenced, ready access to that data lets researchers map out what genes do, and is already helping physicians deliver “precision medicine”—prevention and treatment geared to each patient’s unique genetic makeup.

But it’s the very specificity of genomic data that threatens privacy. Although most genomic databases strip away any information linking a name to a genome, such information is very hard to keep anonymous. “I’m not convinced you can truly de-identify the data,” says Mark Gerstein, a Yale professor who studies large genetic databases and is a fierce privacy advocate. He is concerned about whether even the most cutting-edge protections can safeguard personal data. “I am not a believer that large-scale technical solutions or ‘super-encryption’ will solely work,” he says. “There also needs to be a process for credentialing the individuals who access this data.”

Concerns about the privacy of genetic data come at a time when an increasing number of people contribute their genetic information to research, sometimes unwittingly. A few hospitals have begun sequencing the DNA of newborns to assess their genetic risks, and millions of adults have had some form of genetic testing. Others, who have their DNA analyzed by consumer genetics companies such as 23andMe and Ancestry.com for their own purposes, may also allow that data to be used for research. Moreover, to aid in genetic studies, medical centers may add information from patients’ biological samples to their databases. Whole-genome sequencing will soon be available for as little as $100, and a handheld sequencing device is already on the market. All of this contributes to estimates that by 2025, genetic blueprints will exist for almost two billion people, a quarter of the world’s population.

In the shadow of the genomic revolution, a quandary has taken root. Sharing this tsunami of genetic data poses a risk to patients, and the size and nature of that threat may not be fully known until significant damage has already been done. The race is on, from several directions, to head off the problem before it really starts.

GENOMIC DATABASES WOULD BE perfectly secure if no one used them. But they exist so that scientists and research institutions can share data in the quest for discovery. The National Institutes of Health, for instance, is collecting the genomic sequences and health data of one million people as a part of the federal Precision Medicine Initiative, and researchers have access to this national resource as they work to identify gene-disease associations and tailor treatments. Yet security is top of mind, and to use the NIH database, researchers must go through an extensive review process to have their proposed studies assessed. Even then, the NIH provides selected applicants access only to limited sets of genetic data—and sometimes only to summaries of that data. The data behind the researchers’ discoveries—not just information about individual subjects, but also summary statistics such as aggregated data averages, minimum and maximum values, and standard deviations—are kept private at publication.

The agency’s tight grip on genetic information is in part a reaction to work by David Craig, professor of Translational Genomics at the Keck School of Medicine at University of Southern California. In 2008, Craig showed it was possible to take someone’s DNA sample and compare it with the summary statistics of an NIH study, sorting through the data until he found a match. This meant that with access to a strand of your hair and to genetic sequencing tools, a hacker with a decent knowledge of statistics could possibly spot your genes in the NIH databases and determine whether they had been used in research about, say, Parkinson’s disease or illegal drug use—details that you might well prefer to keep private.
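The statistical idea behind this kind of matching can be illustrated with a toy simulation. The sketch below is not Craig's actual procedure or data. It assumes a simplified score in which, at each genetic marker, a person's DNA is compared with a study's published average and with the average of an unrelated reference group. Because a participant's own DNA nudges the study average toward it, a participant tends to score higher than an outsider. Every number and name here (`NUM_SITES`, `STUDY_SIZE`, the simulated people) is hypothetical.

```python
import random


def draw_genotype(rng, allele_freq):
    """Return one person's allele dosage at a marker: 0.0, 0.5 or 1.0."""
    copies = int(rng.random() < allele_freq) + int(rng.random() < allele_freq)
    return copies / 2


def average_per_site(group):
    """Summary statistic: the mean dosage at each marker across a group."""
    return [sum(site) / len(group) for site in zip(*group)]


def membership_score(person, reference_avgs, study_avgs):
    """Sum over markers of how much closer the person is to the study than to the reference."""
    return sum(
        abs(y - ref) - abs(y - study)
        for y, ref, study in zip(person, reference_avgs, study_avgs)
    )


rng = random.Random(0)  # fixed seed so the simulation is repeatable
NUM_SITES = 10_000      # hypothetical number of genetic markers
STUDY_SIZE = 20         # hypothetical number of people in each group

# Hypothetical population frequency of a variant at each marker.
population_freqs = [rng.uniform(0.1, 0.9) for _ in range(NUM_SITES)]


def simulate_person():
    return [draw_genotype(rng, p) for p in population_freqs]


study = [simulate_person() for _ in range(STUDY_SIZE)]
reference = [simulate_person() for _ in range(STUDY_SIZE)]
study_avgs = average_per_site(study)          # what a study might publish
reference_avgs = average_per_site(reference)  # a comparison group

participant = study[0]        # someone whose DNA is in the study
outsider = simulate_person()  # someone whose DNA is not

participant_score = membership_score(participant, reference_avgs, study_avgs)
outsider_score = membership_score(outsider, reference_avgs, study_avgs)
print(f"participant: {participant_score:.1f}  outsider: {outsider_score:.1f}")
```

In this toy setup the signal from any single marker is tiny. It becomes visible only because thousands of markers are added together, which is why summary statistics that look harmless one at a time can still give a participant away.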

In the decade since Craig cracked the NIH database, several other researchers have also unmasked the identities behind supposedly anonymized genetic data. Recently, Yaniv Erlich, a computer science professor at Columbia University and a Core Member at the New York Genome Center, showed that it was possible to identify 50 people who had contributed data to the international 1000 Genomes Project. Erlich took genetic sequence data with no names attached—similar to what a researcher with access to a research database would receive—and compared it with public data on a genealogy website that offers a link to surnames. He then cross-referenced these genetic profiles against other databases and public records to narrow in on candidates of the right age, geography and family tree. (He has provided only limited details about his process to prevent nonscientists from copying it.)

So far, only academic researchers have admitted to such sleuthing, undertaken to highlight the vulnerabilities of today’s databases. “But just because academics are the only ones publishing about this doesn’t mean they are the only ones carrying out attacks,” says Alexandra Wood, a fellow at the Berkman Klein Center for Internet & Society at Harvard. “It’s quite likely other people are doing this and learning sensitive information.”

Threats to privacy could multiply once there is an active market for genetic data. Wood speculates that it could be valuable to life insurance companies, which could use it to raise your premiums; or it could become a tool for those who want to prove or disprove paternity. White nationalist groups, who have become preoccupied with genetic testing, might find a way to weaponize the ancestry data the tests can show. It would not be the first time genetic information was used against a race or races. “Genetics has a very troubled history, from Darwin on,” says Yale’s Mark Gerstein.

VIOLATIONS OF GENETIC PRIVACY, whatever form they may take, could even threaten people who opt not to get their genomes sequenced or placed on public websites. The actions of a close relative, for instance, might be enough. A recent article in the Journal of Genetic Counseling, coauthored by identical twins, explored the ethical implications of one twin publishing genetic data that the other might wish to keep private.

Many more people could be affected by the upshot of a recent debate over the Common Rule, a set of regulations that apply to all U.S. government–funded research involving human subjects. The Common Rule, issued in 1991 by 15 federal departments and agencies, outlines the protections of a human subject, including a provision requiring informed consent for using someone’s medical data for research purposes. But the definition of a human subject in the Common Rule is somewhat narrow. Specimens, such as a vial of blood or tumor cells, do not qualify as human subjects as long as researchers cannot “readily ascertain” the identity of the individual contributing them. In those cases, the researcher is not required to get the informed consent of the patient they came from.

The proposed first revision of the rule in 25 years sparked intense debate among ethicists, scientists and patient-privacy advocates. It suggested altering the definition of a human subject, so that all specimens would require consent whether identifiable or not. But that proposal, put forth in 2015, was not adopted in the January 2017 revision. Instead, a panel of experts and stakeholders will now be charged with regularly advising federal agencies in determining what “identifiable” means and how that definition might change, says Heather Pierce, senior director of science policy and regulatory counsel at the Association of American Medical Colleges. This consultation will happen within one year of implementation of the revised Common Rule and at least every four years after that.

For now, at least, “readily identifiable” is a low standard, says Mark Rothstein, professor of law and medicine and the founding director of the University of Louisville Institute for Bioethics, Health Policy and Law. The rule still allows certain coding systems for identifying information, and a determined hacker might be able to crack them, according to Rothstein, who says he is “fairly confident” that big research institutions are already tapping medical biospecimens as a key source of genomic data. “I think the idea that we can safely protect the identities of individuals who have contributed biospecimens in perpetuity is not realistic,” Rothstein says.

IF GENETIC DATA DOES get leaked, there are legislative safeguards intended to mitigate the damage. The federal Genetic Information Nondiscrimination Act, or GINA, passed in 2008, prohibits health insurance companies from using information about a genetic risk (a positive test for a mutation of the BRCA1 gene, for example, which confers a very high risk of breast cancer) to deny coverage or hike premiums. Employers can’t withhold a job, a raise or a promotion because of someone’s genetic makeup, and neither health insurers nor employers can request, purchase or require access to anyone’s genetic information. That prohibition covers not just tests of DNA, but also of RNA, chromosomes, proteins and metabolites. The protections extend to the genetic code or medical history of family members as well.

But other protections slipped through the cracks amid lobbying pressure from business groups. GINA applies only to health insurers and employers; it wouldn’t stop a long-term-care insurance company from penalizing someone for a genetic predisposition to Alzheimer’s disease, for example. And last year, Fast Company wrote about a 36-year-old woman whose life insurance application was legally denied because testing revealed she had the BRCA1 gene mutation.

“The law was meant to reassure people that the world is safe for genetic medicine,” says Rothstein. But insurance companies and other commercial entities not covered by GINA have plenty of incentives to profit from someone’s genetic data in ways that could be harmful.

And now, even GINA’s limited protections face rollbacks. A Republican bill introduced in March would allow companies to require employees to undergo genetic testing and provide health information about themselves and their family members through wellness programs—or face fines. Under that bill, employers could levy significant penalties for not participating in a “voluntary” wellness program—increasing premiums for health insurance by up to 50%, or an average of $5,000 per family. The bill would then allow employers to share health or genetic information collected through wellness programs with business partners that help administer the programs. Such outside companies often sell employee information, and that could happen with genetic data, too.

ALL OF THESE DEVELOPMENTS add urgency to the need to safeguard research databases and the people who contribute to them. George Church, a geneticist at Harvard, has taken one approach with his Personal Genome Project, which has collected genomic data and information about health and personal traits from about 5,000 volunteers. Because he considers it naïve to promise complete privacy to research subjects who contribute genomic data, he has chosen to be as transparent as possible about potential risks and about how data will be used. The project’s genomes are available to the public, with the data anonymized as well as possible. But before volunteers are allowed to participate, they must pass a test about the potential privacy risks of releasing their genomes—with a perfect score. “We recruit people with the understanding that the data will be reidentified,” Church says.

Others are working on solutions that could help genetic research co-exist with true genetic privacy. One promising option may be blockchain, a complex technology often used to safeguard digital financial information, notably for the digital currency bitcoin. Blockchain is considered so reliable and hack-proof that the U.S. military’s research arm—the Defense Advanced Research Projects Agency, or DARPA—is looking into how it could be used to guard nuclear secrets.

Encrypgen, a new software firm, aims to apply blockchain technology to store genetic data securely; Genetic Alliance, an umbrella group for patient-advocacy organizations, and Global Alliance for Genomics and Health, a data-sharing network that connects genetic researchers around the world, are also looking into it. A “private” blockchain might give multiple, far-flung researchers access to a wealth of genetic data without making that data vulnerable to hacking.

Another possible solution “smudges” genomic data just enough to keep a hacker from determining an individual’s identity. Last year, researchers from MIT described a method of adding “differential privacy” to genomic data sets. This slightly scrambles the data, so that individual DNA sequences become unrecognizable but still of use to researchers.
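One common way to achieve differential privacy is to add carefully sized random noise to the statistics a database releases, rather than to each person's sequence. The sketch below illustrates that general idea with the textbook Laplace mechanism applied to a simple count. It is not the MIT team's method, and the cohort, the function names and the privacy setting `epsilon` are all assumptions made for illustration.

```python
import random


def laplace_noise(rng, scale):
    """Sample Laplace(0, scale) noise as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_carrier_count(carrier_flags, epsilon, rng):
    """Release the number of carriers plus noise sized by the privacy budget epsilon."""
    true_count = sum(carrier_flags)
    sensitivity = 1  # adding or removing one person changes the count by at most 1
    return true_count + laplace_noise(rng, sensitivity / epsilon)


rng = random.Random(0)  # fixed seed so the example is repeatable

# Hypothetical cohort of 1,000 people: True means the person carries a given variant.
cohort = [True] * 120 + [False] * 880

noisy_count = private_carrier_count(cohort, epsilon=0.5, rng=rng)
print(round(noisy_count, 1))
```

A smaller `epsilon` means more noise and stronger privacy, while a larger one means a more accurate answer. That dial is the trade-off between protecting individuals and keeping the data useful to researchers.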

Yet Columbia’s Yaniv Erlich and others, including Church, fear differential privacy could compromise biomedical research, with smudged data making it harder to get clear results. Mark Gerstein at Yale believes that scientists would be better off testing hypotheses on small amounts of publicly available but pure data, even if it’s not representative of the overall population, rather than using larger quantities of imperfect data.

Describing another way to safeguard genetic data, a February paper in The American Journal of Human Genetics showed how game theory might be used to design ideal privacy settings for a genetic research database, with just enough protection to keep sensitive data from leaking yet open enough to make data easily accessible for research. Game theory uses mathematical modeling to gauge conflicts among rational participants, and for this study, researchers created an equation that modeled the risks, benefits and uncertainties of various data-sharing policies. Such a tool could enable those designing privacy policies to test and refine them.
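A toy version of such a model shows the logic. The sketch below is not the equation from the paper. It assumes a simple two-player setup in which a database chooses a sharing policy, a rational attacker strikes only when the expected gain exceeds the cost, and the database picks the policy that leaves it best off after anticipating that response. All policy names and numbers are hypothetical.

```python
from collections import namedtuple

# research_value: benefit to science of sharing under this policy (hypothetical units)
# reid_probability: chance that an attack re-identifies a participant
Policy = namedtuple("Policy", ["name", "research_value", "reid_probability"])

ATTACK_GAIN = 10.0   # hypothetical payoff to an attacker who succeeds
ATTACK_COST = 3.0    # hypothetical cost of mounting an attack
PRIVACY_LOSS = 20.0  # hypothetical harm to the database if an attack succeeds


def attacker_attacks(policy):
    """A rational attacker strikes only if the expected gain exceeds the cost."""
    return policy.reid_probability * ATTACK_GAIN > ATTACK_COST


def publisher_payoff(policy):
    """Research value minus expected harm, given the attacker's best response."""
    if attacker_attacks(policy):
        expected_harm = PRIVACY_LOSS * policy.reid_probability
    else:
        expected_harm = 0.0
    return policy.research_value - expected_harm


policies = [
    Policy("open release", 10.0, 0.60),
    Policy("partial release", 8.0, 0.25),
    Policy("summaries only", 5.0, 0.05),
]

best = max(policies, key=publisher_payoff)
print(best.name, publisher_payoff(best))
```

With these made-up numbers, the winning policy is neither the most open nor the most locked down. It is the most open one that still makes an attack not worth the attacker's effort.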

That kind of decision-making—one that takes many actors and motives into account—may be the best way to think about the problem. Physicians want to make people better, researchers want to find cures, and patients want to ensure that the care they receive stays private. For now, research databases represent a trade-off among those needs.

Stronger privacy laws may be essential alongside improved technology. Earlier this year, Canada passed a genomic-privacy act that protects against discrimination not just from employers, but from life insurers and long-term disability insurers, as well. (Universal health care in Canada guarantees that genomic privacy is not a problem for health insurance.)

Ronald Cohn from The Hospital for Sick Children says that since that law was approved, nine families of patients in his clinic who had refused genomic testing have now undergone it. The family with the child who has the connective tissue disorder hasn’t come back yet, but Cohn is confident they will. Cohn testified in favor of the new law, and he believes clinicians have an important role to play in promoting privacy protection. In the United States, the research community and physicians may need to work together to ensure that the problem of genomic privacy doesn’t slow the progress on today’s most promising research.

Dossier

“My Identical Twin Sequenced our Genome,” by Samantha L.P. Schilit and Arielle S. Nitenson, Journal of Genetic Counseling, April 2017. This article discusses the implications of genomic sequencing for close family members and ethics surrounding consent.

“Differential Privacy: A Primer for a Non-technical Audience (Preliminary Version),” by Kobbi Nissim et al., Harvard University, Privacy Tools for Sharing Research Data Project, March 2017. This document explains how differential privacy, a mathematical framework for improving privacy protection when analyzing or releasing statistical data, works.

NIH: National Human Genome Research Institute, www.genome.gov. The NHGRI funds and conducts research to uncover the role that the genome plays in human health and disease.

Stay on the frontiers of medicine

Related Stories

- Please Share

Troves of data are gathered during clinical trials, but most of it stays locked away. Could freeing it lead to new cures?

- Grand Theft Medical

The move to electronic medical records may be helping identity thieves.

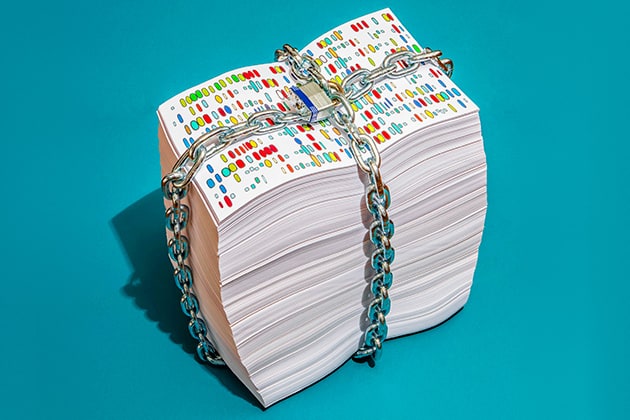

- Under Lock and Key?

Genetic databases have helped medicine make great leaps forward. But is it really possible to keep the identities behind those genes a secret?